AI vendor due diligence for biotech executives: what to ask before you buy

Most biotech executives receive at least one AI vendor pitch every week. The tools range from genuinely useful to actively risky, and the marketing language is nearly identical across the spectrum. Very few executives have a structured framework for deciding which vendors are worth the conversation, let alone the contract.

This article provides a practical four-question due diligence framework that surfaces the material risks before you commit.

Why AI vendor evaluation is different from standard software procurement

Standard software procurement evaluates functionality, integration, support, and commercial terms. AI vendor evaluation adds three risk dimensions that standard procurement processes are not designed to catch.

First, AI tools can behave in ways that are not fully predictable from their documentation. The model may perform well in the general case and poorly in your specific use case. Testing on your own data before signing is essential, not optional.

Second, AI tools in regulated biotech activities carry compliance implications that generic software does not. The FDA’s 2025 AI guidance and EMA lifecycle management updates both create documentation expectations for AI tool use in GxP-regulated processes, including those subject to the electronic records requirements of 21 CFR Part 11 in the US. A tool that does not support audit trail requirements cannot be used compliantly in those processes.

Third, AI vendor viability risk is high. The current AI vendor market includes many well-funded startups with only 12 to 24 months of runway. Signing a multi-year implementation contract with a company in that category is a business continuity risk.

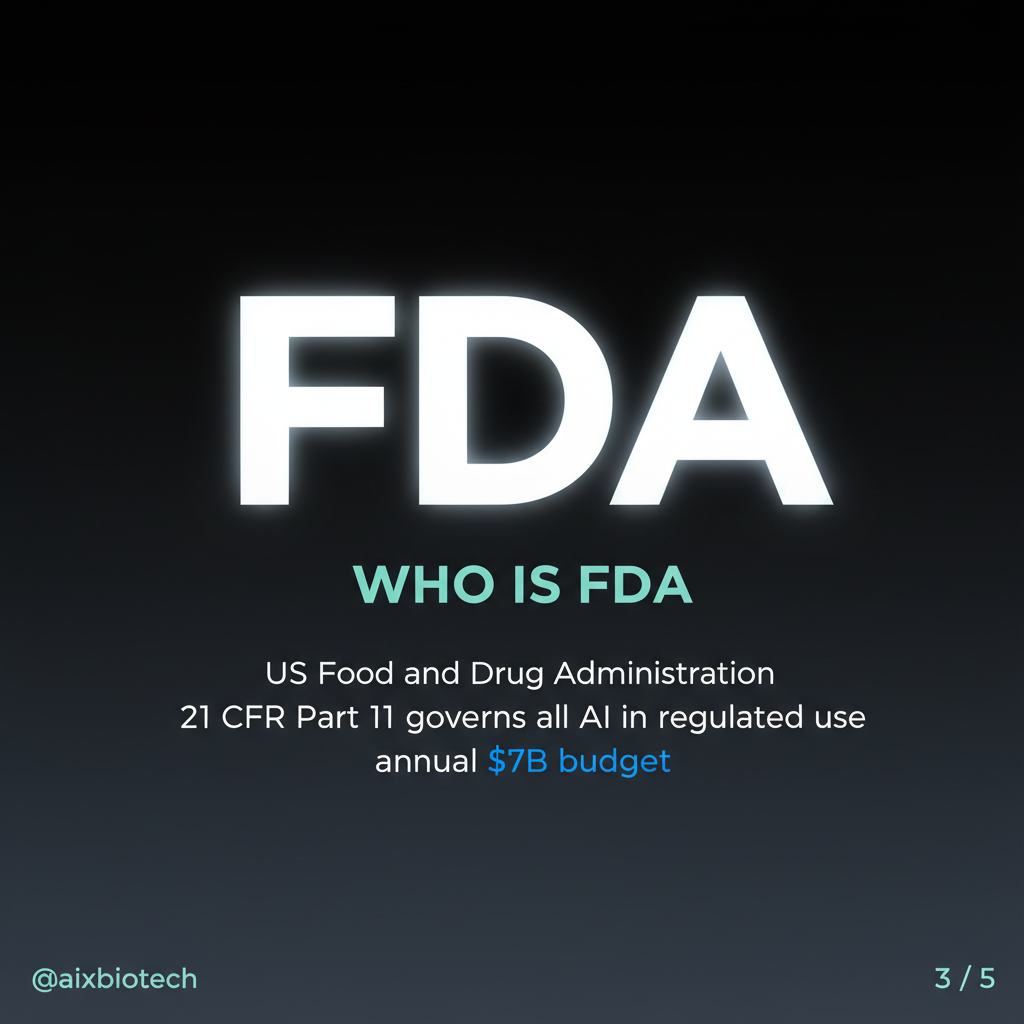

The four-question framework

Question 1: Where does my data go and who can access it?

Many AI tools feed input data back into shared training models. This means that queries you send, documents you upload, and outputs you generate may be used to retrain a model that other companies also use. For biotech companies handling commercially sensitive intelligence — pipeline data, pricing strategy, clinical programme details — this is a material data governance risk. Proprietary data, trade secrets, and anything touching IND or NDA submissions must never enter an external AI training pipeline.

Ask the vendor specifically: is our data used to train shared models? Is it processed within our regulatory jurisdiction? What are your data retention policies? Who at your organisation has access to our data?

Question 2: How was the model validated and on what dataset?

A model trained on general pharma data may perform poorly in a rare disease context, an emerging market regulatory environment, or a speciality distribution scenario. Ask the vendor: what was the training dataset? What are the documented accuracy rate and known error modes? Has the model been validated for our specific use case? Can we access a sandbox environment to test performance on our own data before committing?
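For teams that do get sandbox access, the test can be as simple as comparing the tool's outputs against answers you already know, broken down by the segments you care about. The Python sketch below is illustrative only: the function name, the segment labels, and the sample cases are all hypothetical, and a real evaluation would use your own labelled data and whatever metric fits the use case.

```python
from collections import Counter


def summarise_sandbox_run(cases):
    """Summarise a sandbox test.

    cases: list of (expected, predicted, segment) tuples built from
    your own labelled data. All names here are hypothetical.
    """
    total = len(cases)
    if total == 0:
        raise ValueError("no test cases supplied")

    correct = sum(1 for expected, predicted, _ in cases if expected == predicted)

    # Count misses per segment to show where the model fails,
    # not only how often it fails overall.
    errors_by_segment = Counter(
        segment for expected, predicted, segment in cases if expected != predicted
    )

    return {
        "accuracy": correct / total,
        "errors_by_segment": dict(errors_by_segment),
    }


# Hypothetical results: (known answer, vendor model output, segment)
cases = [
    ("relevant", "relevant", "common indication"),
    ("not relevant", "not relevant", "common indication"),
    ("relevant", "not relevant", "rare disease"),
    ("not relevant", "relevant", "rare disease"),
]

summary = summarise_sandbox_run(cases)
print(summary)
```

In this made-up sample the overall accuracy hides the fact that every error falls in the rare disease segment, which is exactly the kind of gap between the general case and your specific use case that the sandbox test is meant to expose.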

Question 3: What documentation do you provide for regulatory audit trails?

The FDA and EMA now expect companies to document AI tool use in regulated activities. This includes the tool name, version, validation status, and the human review process applied to AI-generated outputs. If the vendor does not provide the documentation infrastructure to support this, you cannot use their tool in regulated activities compliantly.
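To make the documentation items concrete, here is a minimal Python sketch of a single record capturing the tool name, version, validation status, and human review applied to an output. The class name, field names, and example values are assumptions for illustration; this is not a regulatory schema, and a record like this does not by itself satisfy GxP or 21 CFR Part 11 requirements.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
import json


@dataclass(frozen=True)  # frozen: a record cannot be modified after creation
class AIUseRecord:
    # Illustrative field names based on the items listed in the text.
    tool_name: str
    tool_version: str
    validation_status: str
    reviewed_by: str
    review_outcome: str
    recorded_at: str


def make_record(tool_name, tool_version, validation_status, reviewed_by, review_outcome):
    """Build a record, refusing to create one with any field left blank."""
    fields = {
        "tool_name": tool_name,
        "tool_version": tool_version,
        "validation_status": validation_status,
        "reviewed_by": reviewed_by,
        "review_outcome": review_outcome,
    }
    for name, value in fields.items():
        if not value.strip():
            raise ValueError(f"{name} must not be empty")
    # Timestamp in UTC so records from different sites are comparable.
    return AIUseRecord(recorded_at=datetime.now(timezone.utc).isoformat(), **fields)


# Hypothetical example values.
record = make_record(
    tool_name="ExampleTool",
    tool_version="2.3.1",
    validation_status="validated for intended use",
    reviewed_by="QA reviewer",
    review_outcome="accepted with edits",
)
print(json.dumps(asdict(record), indent=2))
```

A useful question for the vendor is whether their platform can supply the version and validation fields automatically, or whether your team would have to maintain them by hand.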

Question 4: What is your runway and what happens if you are acquired?

AI startup mortality in healthcare is high. Ask the vendor: what is your current funding position and runway? What are your contractual obligations to customers in the event of acquisition or discontinuation? Is our data exportable in standard formats if we need to migrate?

Rule of thumb: these four questions eliminate approximately 80% of the AI vendor pitches you will receive. The 20% that survive all four are worth a serious technical and commercial evaluation.
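For teams that want to apply the framework consistently across pitches, the four questions can be sketched as a simple screening checklist. The Python below is one possible encoding, not a definitive one: the field names are hypothetical, and the pass/fail rules (for example, treating a runway shorter than the contract term as a failure) are assumptions that each organisation should replace with its own thresholds.

```python
from dataclasses import dataclass


@dataclass
class VendorAnswers:
    # Hypothetical fields, one or two per question in the framework.
    trains_shared_models_on_customer_data: bool  # Question 1
    validated_on_our_use_case: bool              # Question 2
    provides_audit_documentation: bool           # Question 3
    runway_months: int                           # Question 4
    contract_months: int                         # Question 4
    data_exportable: bool                        # Question 4


def screen(vendor: VendorAnswers, regulated_use: bool) -> list[str]:
    """Return the list of failed questions; an empty list means the vendor passes."""
    failures = []
    if vendor.trains_shared_models_on_customer_data:
        failures.append("Q1: customer data feeds shared training models")
    if not vendor.validated_on_our_use_case:
        failures.append("Q2: no validation on our use case")
    # Audit documentation only blocks the tool when the use is regulated.
    if regulated_use and not vendor.provides_audit_documentation:
        failures.append("Q3: no audit trail documentation")
    # Assumed rule: runway should at least cover the contract term.
    if vendor.runway_months < vendor.contract_months or not vendor.data_exportable:
        failures.append("Q4: continuity risk (runway or data export)")
    return failures


# Hypothetical vendor pitching a 36-month contract for a regulated use.
vendor = VendorAnswers(
    trains_shared_models_on_customer_data=False,
    validated_on_our_use_case=True,
    provides_audit_documentation=False,
    runway_months=18,
    contract_months=36,
    data_exportable=True,
)
failures = screen(vendor, regulated_use=True)
print(failures)
```

Returning the list of failed questions, rather than a single yes or no, keeps the reasons visible for the follow-up conversation with the vendor.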

Our earlier analysis of AI as a personal competitive weapon for biotech professionals covers the individual adoption dimension. Vendor due diligence at the organisational level and individual AI capability building at the professional level are complementary strategies.

FAQ

Should you involve legal and compliance in every AI vendor evaluation?

Yes, if the intended use touches a regulated activity. For commercial or administrative uses where no regulated data is involved, a standard commercial review is sufficient. The compliance team should define which activities require their involvement before the first pitch arrives, not after.

How do you handle AI tools embedded in platforms you already use?

Many established platforms (Veeva, Salesforce, IQVIA) are embedding AI capabilities into tools you already have under contract. The four-question framework still applies, but the data governance question is typically easier to answer because a contractual relationship, and the data terms that come with it, already exists.

What is the right procurement process for a low-cost AI tool used informally?

Any tool that touches regulated data or regulated activities requires a formal procurement process regardless of cost. The compliance cost of an informal AI tool deployment that creates a data breach or audit finding is always higher than the cost of a proper procurement review.

These four questions will save you from most of the mistakes that biotech organisations are currently making with AI procurement.

Based on publicly available information. This analysis covers non-proprietary, publicly disclosed data only.