AI for Pharma and Biotech Office Workers: The Compliant Path Forward When IT Says No to Everything

AI in specialty drug demand forecasting is already reshaping commercial operations. AI in supply chain is cutting costs and reducing stockouts. AI in R&D is accelerating molecule screening. Everyone has seen the headlines. But ask the quality manager in Zug, the market access director in London, or the commercial operations lead in Boston what AI tools they actually use day to day, and the answer is usually the same. A locked-down version of Microsoft Copilot. Maybe. If IT approved it last quarter.

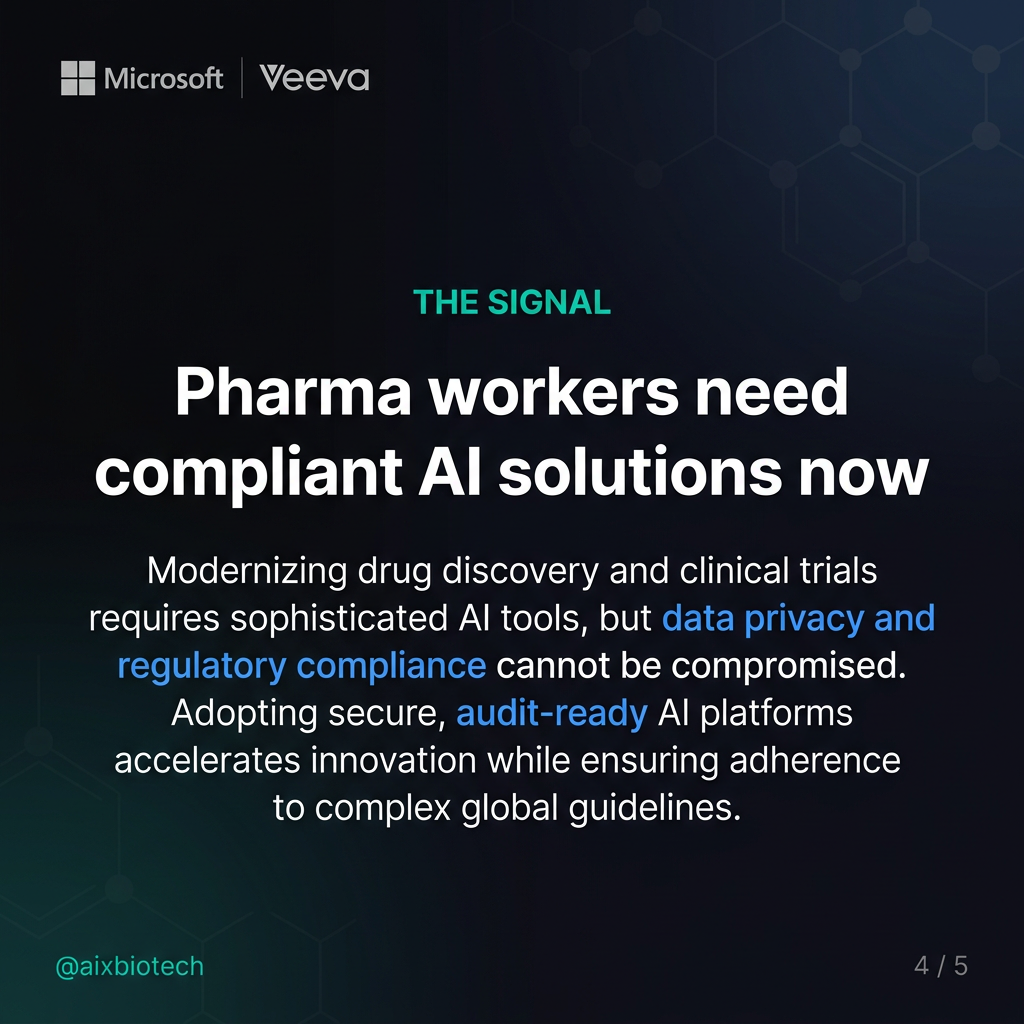

The gap between what AI can do for office-based pharma professionals and what they are actually allowed to use is one of the most underreported productivity problems in the industry right now. This article is about that gap, why it exists, what a realistic path forward looks like, and which tools are genuinely compliant for office use in regulated environments.

Why IT Locks Everything Down

The caution is not irrational. Pharma and biotech companies operate under some of the strictest data governance requirements in any industry. Patient data, clinical trial submissions, unpublished compound data, regulatory correspondence, and proprietary pricing models are all categories of information that must never enter a general-purpose AI tool hosted outside the company’s controlled environment.

The concern is real. Consumer versions of ChatGPT, Claude, and Gemini can use input data to improve their models unless users explicitly opt out, depending on the provider’s settings, and even then audit trails are inconsistent. A market access manager who pastes a payer negotiation strategy into a public AI chatbot may have just sent commercially sensitive information outside the company’s data perimeter. Legal, compliance, and IT teams are not wrong to be worried about this.

But the response in many pharma and biotech organisations has been disproportionate. The answer to a real risk became a blanket ban on all AI tools beyond one approved product. The result is a workforce that can see the productivity gains their peers in other industries are capturing and cannot access the same tools. McKinsey’s 2025 workplace AI report found that knowledge workers using AI tools effectively save between 1.5 and 2.5 hours per day on routine tasks. For a 500-person commercial and operations team, that is a significant competitive gap.
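To put the 1.5 to 2.5 hour range in team terms, the arithmetic can be sketched as follows. This is a back-of-envelope calculation that assumes, optimistically, that every one of the 500 people realises the full saving. The figure of 220 working days per year is an illustrative assumption, not a number from the report.

```python
# Back-of-envelope estimate of hours saved across a team.
# TEAM_SIZE and HOURS_SAVED_PER_DAY come from the text above;
# WORKING_DAYS_PER_YEAR is an illustrative assumption.
TEAM_SIZE = 500
HOURS_SAVED_PER_DAY = (1.5, 2.5)  # low and high end of the reported range
WORKING_DAYS_PER_YEAR = 220       # assumption, adjust for your organisation


def team_hours_saved(team_size, hours_per_person_per_day, days=1):
    """Total hours saved if every team member achieves the stated saving."""
    return team_size * hours_per_person_per_day * days


low_daily, high_daily = (
    team_hours_saved(TEAM_SIZE, h) for h in HOURS_SAVED_PER_DAY
)
low_year, high_year = (
    team_hours_saved(TEAM_SIZE, h, WORKING_DAYS_PER_YEAR)
    for h in HOURS_SAVED_PER_DAY
)

print(f"Per day:  {low_daily:,.0f} to {high_daily:,.0f} hours")
print(f"Per year: {low_year:,.0f} to {high_year:,.0f} hours")
```

Under those assumptions the team-level range is 750 to 1,250 hours per working day. Real adoption is uneven, so treat the result as an upper bound on the gap rather than a forecast.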

What Office Workers in Pharma Actually Need

The conversation about AI in pharma defaults quickly to R&D and manufacturing. But the office-based workforce in quality, regulatory, legal, supply chain, market access, commercial, and medical affairs is large, and the tasks they do every day are exactly the kind of work where AI generates measurable value.

- Quality teams spend significant time drafting SOPs, writing deviation reports, preparing CAPA documentation, and reviewing audit findings. These are document-intensive tasks that follow predictable structures and do not require access to patient data or regulated systems.

- Regulatory affairs teams monitor agency guidance, summarise competitor label changes, prepare variation submissions, and track global approval timelines. Much of this work draws on publicly available information or internal non-regulated documents.

- Legal and compliance teams review contracts, summarise case law, draft internal policies, and prepare briefing notes. As of October 2025, Anthropic reported that legal professionals send over 6 million queries per month to AI tools despite firm-level restrictions (Law.com, 2025). The demand is there regardless of the policy.

- Market access and pricing teams model reimbursement scenarios, summarise HTA guidance documents, and prepare value dossiers. Most of the inputs are published reports, not protected data.

- Supply chain and commercial operations teams write internal reports, prepare meeting summaries, build presentation decks, and analyse distributor performance data. Much of this work involves non-regulated internal documents.

None of these tasks require feeding patient records or clinical trial submissions into an AI tool. The work is document-heavy, repetitive in structure, and time-consuming. It is exactly where AI assistance pays off fastest.

The Real Risk Boundary

Before looking at compliant tools, it helps to be precise about where the actual data risk sits. Not all company information is equally sensitive, and blanket AI bans often fail to distinguish between categories.

The data that must never enter any AI tool without validated, controlled infrastructure includes:

- Patient data of any kind (GDPR, HIPAA)

- Clinical study reports and trial data before publication (21 CFR Part 11, ICH E6)

- IND, NDA, and MAA submission dossiers

- Unpublished compound or formulation data (trade secret, IP)

- Regulatory correspondence with agencies

The data that is generally safe for use with enterprise-grade AI tools, subject to IT and legal sign-off, includes:

- Publicly available scientific literature and agency guidance

- Internal non-regulated documents such as meeting notes and project plans

- Anonymised market research and commercial analytics

- Published competitive intelligence from sources like Citeline, Evaluate, and IQVIA reports

The path forward is not about removing restrictions. It is about building a clear, auditable framework that distinguishes between these categories and enables office workers to use AI on the safe side of that line.
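One way to make that line concrete is to write the two categories down as a lookup that anyone can apply. The sketch below is a minimal illustration, not a validated control. The category labels are hypothetical shorthand for the two lists above, and the rule that anything unrecognised is held back until reviewed is an assumption about how a cautious organisation might handle gaps.

```python
from enum import Enum


class Handling(Enum):
    RESTRICTED = "restricted"      # validated, controlled infrastructure only
    PERMITTED = "permitted"        # enterprise-grade tools, after IT and legal sign-off
    UNCLASSIFIED = "unclassified"  # not on either list yet: hold until reviewed


# Hypothetical labels standing in for the categories described above.
RESTRICTED_CATEGORIES = {
    "patient_data",
    "clinical_study_report",
    "unpublished_trial_data",
    "submission_dossier",
    "unpublished_compound_data",
    "regulatory_correspondence",
}
PERMITTED_CATEGORIES = {
    "public_literature",
    "agency_guidance",
    "internal_non_regulated",
    "anonymised_market_research",
    "published_competitive_intelligence",
}


def classify(category: str) -> Handling:
    """Map a category label to its handling rule."""
    if category in RESTRICTED_CATEGORIES:
        return Handling.RESTRICTED
    if category in PERMITTED_CATEGORIES:
        return Handling.PERMITTED
    return Handling.UNCLASSIFIED


def may_use_enterprise_ai(categories) -> bool:
    """True only if there is at least one category and all are permitted.

    A document that mixes permitted and restricted content is treated as
    restricted, so the most sensitive category decides.
    """
    cats = list(categories)
    return bool(cats) and all(classify(c) is Handling.PERMITTED for c in cats)


print(may_use_enterprise_ai(["agency_guidance", "public_literature"]))
print(may_use_enterprise_ai(["agency_guidance", "patient_data"]))
```

The design choice worth noting is the default: a category nobody has classified yet is not treated as safe. That keeps the framework auditable without requiring a legal review for every routine task.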

Which Tools Are Genuinely Compliant

There are several enterprise-grade AI tools that pharma and biotech IT and compliance teams can evaluate with confidence. None of them are silver bullets, and all require proper configuration, data processing agreements, and internal sign-off before deployment. But they are materially different from consumer AI tools in how they handle data.

Microsoft 365 Copilot (Enterprise) is the most likely candidate for organisations already running on Microsoft infrastructure. In the enterprise configuration, data does not leave the company’s Microsoft 365 tenant. Prompts and responses are not used to train Microsoft’s models. Audit logs are available for compliance review. Microsoft 365 is formally mapped to 21 CFR Part 11 requirements (Microsoft Learn, 2025). A properly configured Microsoft 365 Copilot deployment is not the same product as a consumer AI chatbot. IT teams that have approved a closed Copilot version have already made the right foundational decision. The question is whether they have configured it to its full capability.

Veeva AI is becoming increasingly relevant for commercial, regulatory, and quality teams already operating on the Veeva Vault platform. Veeva launched its first AI agents in December 2025 for Vault CRM and PromoMats, with Safety and Quality agents planned for April 2026 and Regulatory agents for August 2026 (Veeva, 2026). Because Veeva AI operates within the Vault environment, the data governance model is the same as for the underlying Vault platform. For teams already using Veeva, this is a low-friction path to AI-assisted document generation, content review, and workflow automation without introducing new data perimeter risks.

Azure OpenAI Service allows organisations to deploy GPT-4-class models within their own Azure tenant. No data is used for model training. The deployment can be configured to meet GDPR requirements and supports audit logging compatible with regulated environments. This is more of an infrastructure option than a ready-to-use tool, but for organisations with internal IT capability it provides a compliant foundation for building purpose-specific AI assistants for quality, regulatory, or legal functions.

Microsoft Teams with Copilot for meetings is worth separating from the broader Copilot suite. With Copilot enabled in Teams, meeting transcription, summarisation, and action-item capture all stay within the Microsoft 365 tenant. For the quality manager who cannot use Otter.ai or Fireflies, this is the compliant equivalent. It requires the full Microsoft 365 Copilot licence but provides the meeting note functionality that most office workers are asking for.

Otter.ai Enterprise and Fireflies.ai Enterprise both achieved HIPAA compliance in 2025 (meetily.ai, 2025). However, pharma and biotech organisations should not assume HIPAA compliance translates directly to GxP readiness or 21 CFR Part 11 alignment. Both tools require detailed legal review of their data processing agreements before any deployment in a regulated environment. They may be appropriate for non-regulated meeting contexts. They should not be used for meetings involving clinical, regulatory, or IP-sensitive discussions without explicit legal clearance.

The Path Forward for Pharma IT and Leadership Teams

The current situation in many pharma organisations is unsustainable. Office workers who cannot access AI tools do not stop wanting to use them. Shadow AI, where employees use personal devices or personal accounts to access AI tools outside IT visibility, is a materially larger risk than a properly governed enterprise deployment.

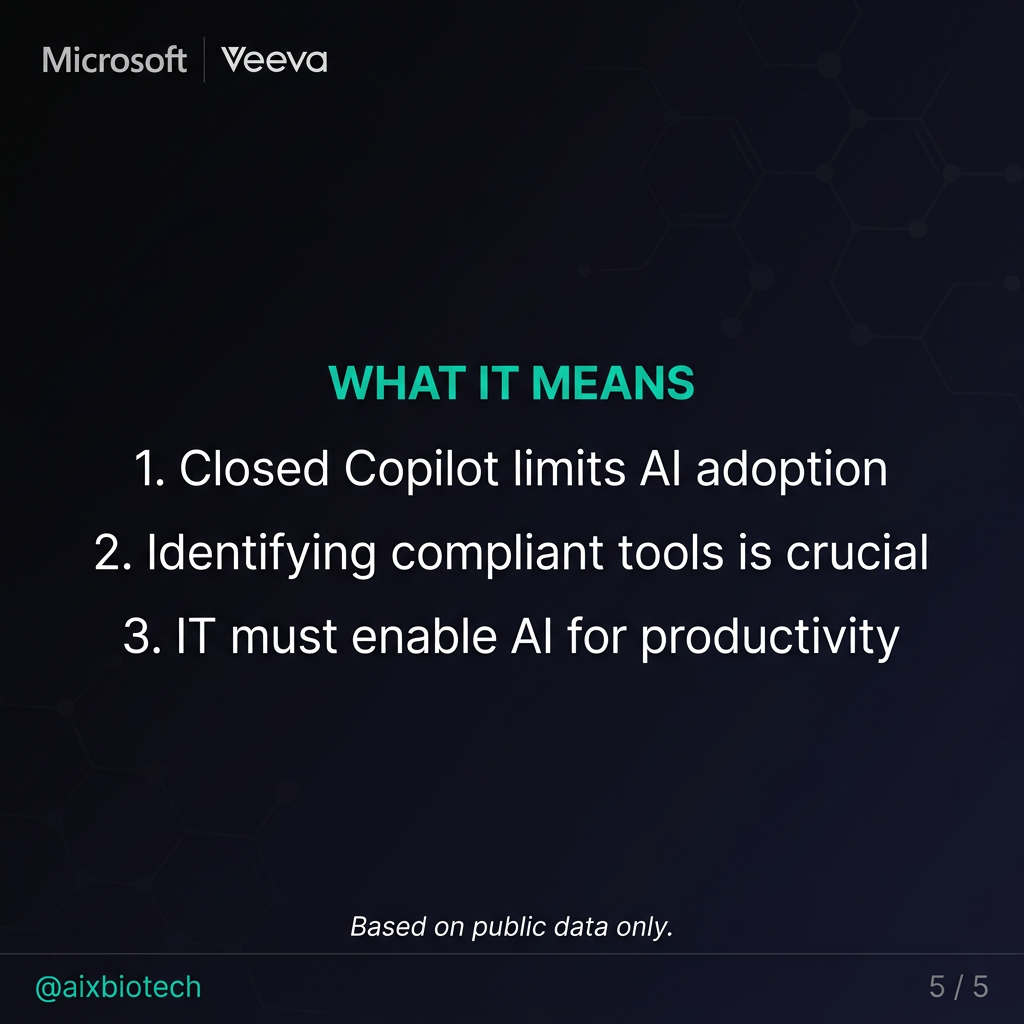

A realistic path forward has four components.

First, build a data classification framework. Define clearly which categories of information can be used with AI tools and which cannot. Make it simple enough that a commercial analyst can apply it without a legal review for every task.

Second, expand the approved tool set beyond a single product. A closed Copilot instance is a good start. It is not a complete AI strategy for an office workforce. Evaluate Veeva AI for teams on Vault. Evaluate Azure OpenAI for teams that need purpose-built assistants. Enable full Copilot for meetings within Teams for teams that need transcription and summarisation.

Third, invest in training. The risk of AI tools in a regulated environment is not primarily technical. It is human. Employees who understand what data is safe to use, how to prompt effectively, and how to review AI outputs critically are far less of a compliance risk than employees who have been blocked from tools and are finding workarounds on their own.

Fourth, establish a governance process for new tool evaluation. The AI tool landscape is moving faster than annual IT review cycles. A standing cross-functional committee including IT, legal, compliance, and business representatives can evaluate new tools against a defined framework without creating a bottleneck that leaves the workforce years behind the market.
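A defined framework can be as simple as a fixed checklist that every candidate tool is scored against. The sketch below is illustrative only. The criteria are drawn from the properties discussed in this article (data location, model training, audit logs, data processing agreements, internal sign-off), the tool name is hypothetical, and a real committee would add its own validation and security requirements.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ToolAssessment:
    """One committee review of a candidate AI tool (illustrative criteria)."""
    name: str
    data_stays_in_controlled_environment: bool
    no_training_on_customer_data: bool
    audit_logs_available: bool
    data_processing_agreement_signed: bool
    legal_and_compliance_sign_off: bool


def open_items(assessment: ToolAssessment) -> list[str]:
    """Return the names of criteria the tool does not yet meet."""
    return [
        f.name
        for f in fields(assessment)
        if f.name != "name" and not getattr(assessment, f.name)
    ]


def is_approvable(assessment: ToolAssessment) -> bool:
    """A tool is approvable only when no criterion is outstanding."""
    return not open_items(assessment)


# Hypothetical candidate: technically sound, paperwork still pending.
candidate = ToolAssessment(
    name="ExampleNotes Enterprise",
    data_stays_in_controlled_environment=True,
    no_training_on_customer_data=True,
    audit_logs_available=True,
    data_processing_agreement_signed=False,
    legal_and_compliance_sign_off=False,
)

print(is_approvable(candidate))
print(open_items(candidate))
```

Listing the outstanding items, rather than returning only a yes or no, gives the business sponsor a concrete to-do list and keeps the committee from becoming the bottleneck it was set up to avoid.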

For context on how AI is already delivering measurable results across biotech operations, see how AI is closing the $356 billion distributor margin gap in biotech and how AI is cutting supply chain costs across biotech.

Key Takeaway

The risk of AI tools in pharma office environments is real but manageable. The cost of doing nothing is not zero. Office workers in quality, regulatory, legal, supply chain, market access, and commercial roles are operating at a measurable productivity disadvantage. The answer is not a blanket ban and it is not unrestricted access. It is a clear data classification framework, an expanded set of enterprise-grade compliant tools, and the training to use them responsibly.

Frequently Asked Questions

Is Microsoft 365 Copilot compliant for use in pharma?

In its enterprise configuration, Microsoft 365 Copilot keeps data within the organisation’s Microsoft 365 tenant and does not use customer data to train its models. Microsoft has formally mapped its enterprise cloud services to 21 CFR Part 11 requirements. Organisations should still conduct their own validation assessment and obtain legal and compliance sign-off before deployment in regulated workflows.

Can AI tools be used for meeting notes in a pharma company?

Yes, within a properly configured environment. Microsoft Teams with Copilot for meetings provides transcription and summarisation that stays within the Microsoft 365 tenant. External tools like Otter.ai Enterprise and Fireflies.ai Enterprise require legal review of data processing agreements before use in regulated contexts.

What is shadow AI and why is it a risk in pharma?

Shadow AI refers to employees using AI tools through personal accounts or devices outside IT visibility and governance. The risk is that sensitive company information may be processed by external AI systems without audit trails or data processing agreements. A governed enterprise AI deployment reduces shadow AI risk by giving employees a compliant alternative.

What data can pharma office workers safely use with AI tools?

Published scientific literature, publicly available agency guidance, anonymised market research, internal non-regulated documents such as meeting notes and project plans, and published competitive intelligence are generally appropriate with enterprise-grade AI tools, subject to IT and legal approval. Patient data, clinical study reports, IND, NDA, and MAA dossiers, unpublished compound data, and regulatory correspondence must not enter AI tools without validated, controlled infrastructure.

Based on publicly available information. This analysis covers non-proprietary, publicly disclosed data only. Tool compliance assessments reference publicly available vendor documentation and regulatory framework guidance.